Step #5: Identify data collection methods and analysis strategies.

Because the program’s effectiveness is judged through data collection and analysis, we must identify the methods we will use to collect data and then analyze that information to improve future implementation. We will follow the process that Bresin, Galley and Thompson describe as “data collection methods and systems utilized to track program progress and reporting details to grantors according to grant requisites” (Sierra Thompson, 2016). The framework below breaks this down into collection methods, program data and more precise categories of data.

First, we will focus on impact evaluation and direct sources of data collection. As stated by UNICEF Innocenti, “the purpose of impact evaluation is to measure impacts and understand to what extent these can be attributed to the program or policy so that judgement can be made about the program or policy’s value” (UNICEF Innocenti, 2014). This will involve observing students throughout the Brain Fit Super Powers program. As previously mentioned, students will receive the program through morning meetings, announcements, monthly assemblies and bulletin boards. We will first administer an initial survey to the students participating in the program. This survey or questionnaire will ask whether students know specific coping strategies to use when they feel escalated. Our staff identified this as a key focus when they were included in school planning as an inquiry process, and they felt it must be specifically targeted. The initial survey is a form of quantitative data, so we could graph the results to represent students’ knowledge of this area of social and emotional learning. As stated in How to Analyze Research Data, “quantitative data must be interpreted because numbers do not speak for themselves. Data analysis is a very important step in the process” (Gallagher, 2013). During this stage of direct source collection, we will conference with randomly selected students while the program is being implemented to see whether they are acquiring this knowledge. This information will be more qualitative, as the answers will not be number based. For example, we might ask: “When you feel frustrated, anxious, or angry, what are some strategies you can use to better find yourself in the optimal learning zone of regulation (i.e., green zone)?” Additional, non-random observations may be made of particular groups who were noted to escalate quickly before the program was implemented.
Bresin, Galley and Thompson describe this method as “observing the program’s participants or how the program functions: a properly trained researcher or outside evaluator will conduct the observation, whether in an organized or informal setting. This should be done over time to ensure the observations are consistent” (Sierra Thompson, 2016). Self and peer assessments and exit slips will also be used to collect further data from direct sources. These will capture students’ reflections on themselves after an activity likely to evoke strong feelings (e.g., math quizzes). Final questionnaires and surveys will be used to gather summative (quantitative) data and identify patterns and changes from the initial survey.

For archival data, we would have access to previous years’ reporting for this particular program’s students. For instance, evaluators may identify the students within our Blended Learning Program, organize their previous school year’s report cards, and randomly select students from this bank of reports to identify observational comments (qualitative) made on term progress reports and summative reports. According to Cohen, Manion and Morrison, “there is no one single or correct way to analyze and present qualitative data” (Cohen, Manion & Morrison, n.d.). When reporting on learners, it is quite common for teachers to write a “Teacher Reflection” that is not entirely related to academics. In fact, this portion of reporting connects directly to the core competencies, which highlight the importance of social and emotional learning. Additionally, in our district, we are asked to complete two interim reports that use core competency language to describe students’ self-regulation progress. The evaluator could use these as qualitative data throughout the process. This form of data collection would fall under Bresin, Galley and Thompson’s example of “What data are already available?” (Sierra Thompson, 2016). We would classify the examples above as records and communications. Furthermore, for students who have individualized education plans (IEPs), the evaluator would have access to this documentation, including the strategies and objectives set out for teaching staff to use. Even Ministry behavioural designations (i.e., moderate or intensive) could be used as qualitative data.

Finally, indirect sources of data collection would be needed. In this case, we would gather data from people outside the classroom who know the affected students. The evaluator could interview parents, caregivers (e.g., grandparents, babysitters), after-school care providers, and even coaches and outreach workers who support children outside of school hours. In our particular context, we would interview parents and outreach workers, because doing so would be timely, financially viable and easy for an evaluator to arrange, as these individuals are often on site.

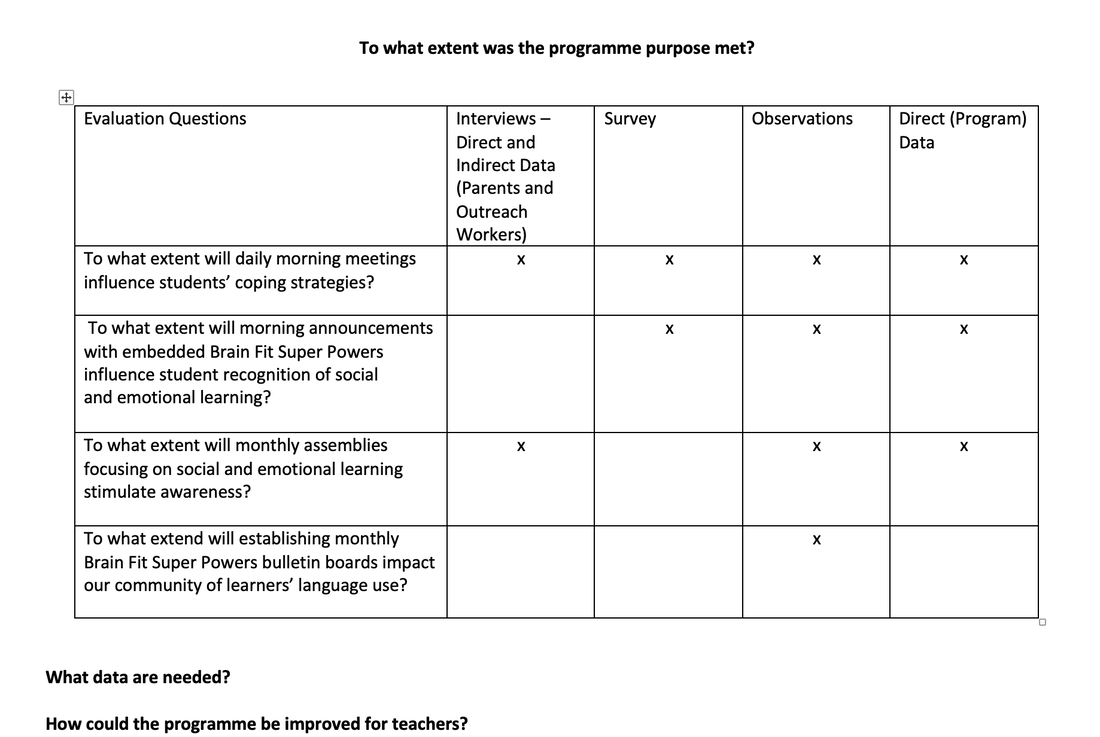

To fully support this process of data collection, as indicated by UNICEF Innocenti, we will construct a matrix identifying the initial evaluative questions to ensure nothing is missed in our data collection approach. UNICEF Innocenti also notes that all methods of collection must be feasible (UNICEF Innocenti, 2014). This has been considered throughout the evaluation design plan and is reflected above. Each of these forms of collection will be completed almost entirely on site, will involve individuals we can observe directly or indirectly, will draw on both qualitative and quantitative sources, and will not add unnecessary costs or resources aside from time after school hours.

As previously mentioned, the data will be analyzed in a variety of ways. According to SpeakUp: How to Analyze Research Data,

“Data analysis involves reviewing the data to answer the research question and understanding its significance. It means studying connections, patterns and trends (as well as exceptions and unique cases) in the data. To get a good sense of the overall picture, it is good practice to review all your data at least once and not consider only those items that seem the most interesting” (Gallagher, 2013).

We will gather the initial and post surveys and graph them to identify patterns and changes. Outliers will also be considered and compared with other data. Observations will be used to identify whether randomly selected and specifically chosen students are using the strategies provided (e.g., naming one’s feelings, walking away, deep breathing or counting backwards, positive self-talk). Additionally, evaluators will gather previous years’ reporting (interim, progress and summative), any IEPs available, Ministry designations and psycho-educational assessments. When examining these data, evaluators will use a matrix to ensure the evaluation questions are fully answered and to identify whether the purpose of the program has been satisfied. Moreover, the final evaluation should act as a vehicle for change and improvement. The data gathered and their analysis should point to opportunities to change students’ self-regulation and their use of strategies in the learning environment. Because this program has not been implemented before this evaluation, we would not be evaluating how to improve a program with years of application behind it, but rather what could be done differently in future delivery.
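As a rough illustration of the survey comparison described above, the following Python sketch computes each student’s change between the initial and final survey and flags unusually large or small changes for follow-up. The student IDs, the scores (taken here to be the number of coping strategies a student can name) and the 1.5 standard deviation cut-off are all hypothetical assumptions made for this example; they are not part of the evaluation plan or drawn from real data.

```python
from statistics import mean, stdev

# Hypothetical data: number of coping strategies each student could name.
# Student IDs and scores are invented for illustration only.
initial = {"S01": 1, "S02": 2, "S03": 0, "S04": 3, "S05": 1, "S06": 2}
final = {"S01": 3, "S02": 4, "S03": 2, "S04": 3, "S05": 8, "S06": 4}


def score_changes(before, after):
    """Return each student's change in score (final minus initial)."""
    return {sid: after[sid] - before[sid] for sid in before if sid in after}


def flag_outliers(changes, threshold=1.5):
    """Flag students whose change lies more than `threshold` sample standard
    deviations from the mean change (an assumed, adjustable cut-off)."""
    values = list(changes.values())
    if len(values) < 2:
        return []
    avg, spread = mean(values), stdev(values)
    if spread == 0:
        return []
    return sorted(sid for sid, change in changes.items()
                  if abs(change - avg) / spread > threshold)


changes = score_changes(initial, final)
print("Average change:", round(mean(list(changes.values())), 2))
print("Outliers to compare against other data:", flag_outliers(changes))
```

Flagged students would not be excluded; in keeping with the plan, their results would be compared against observations, conferences and archival records to understand why they differ.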

Finally, because the data collected will be essential for change, there will be a significant amount of it. In this context, only four Blended Classes will be using the program, each with an average of 12 students, or roughly 48 students in total. Although this is not a large number of students to gather feedback from, the overall analysis could still be somewhat time consuming. Compared with school-wide programming, however, it will be much more cost effective to evaluate at this scale before a whole-school implementation.

Step #6: Describe approach to enhance evaluation use.

As stated by Alkin and Taut, “evaluation use (or evaluation utilization) refers to the way in which an evaluation and information from the evaluation impacts the program that is being evaluated” (Alkin & Taut, 2003, 1). To better identify how the evaluation will have an impact on the program, we must delve deeper into the key methods of use.

The purpose of the Brain Fit Super Powers is to enhance each student’s mental health, which will result in improved overall well-being, emotional and physical health, and academic achievement. With this in mind, the key to evaluation is ensuring that its use provides appropriate and valid input to improve the program. As this particular program has never been implemented in this context, this will be a preliminary experience. Even so, this experience and evaluation should provide reasonable feedback to advance the program. According to Weiss, author of Have We Learned Anything New About the Use of Evaluation?, there are four main types of use that will benefit an evaluation: instrumental use, conceptual use, mobilizing support and influencing other institutions. Instrumental use occurs “when the evaluator does a good job of understanding the program and its issues, conducting the study and communicating results” (Weiss, 1998, 23). This is the quintessential form of evaluation use. It holds the evaluator accountable for providing data and analysis that improve the program, and it depends on program stakeholders considering this feedback the next time they facilitate the process. In this context, our evaluation will have an impact on the program because we will gather results from the series of data described in the last section of Module 3 and identify trends, patterns and outliers. Conceptual use is when program people “learn even more about the strengths and weaknesses and possible directions for action” (Weiss, 1998, 24). The third type of use is “mobilizing support”. Weiss states, “often program managers and operators know what is wrong and what they need to do to fix the program. They use evaluation to legitimate their position and gain adherents” (Weiss, 1998, 24). Finally, the last form of use is “influence on other institutions”.
Weiss mentions, “when evaluation adds to the accumulation of knowledge, it can contribute to large-scale shifts in thinking – and sometimes, ultimately, to shifts in action” (Weiss, 1998, 24). Through the practice of these forms of use, continuous improvement of programs will occur. One point that must be emphasized within our focus on social and emotional learning and evaluation use is collaboration. As our process of school planning through inquiry was developed through collaboration and by identifying a student population’s biggest needs, collaboration must occur within evaluation use as well. Weiss states, “collaboration between evaluators and program staff all through the evaluation process tends to increase the local use of evaluation findings” (Weiss, 1998, 23). These findings will therefore support the program’s growth and any necessary adaptations. This collaboration should also involve evaluators who are familiar with the content and context. Shulha and Cousins, authors of Evaluation Use: Theory, Research and Practice, assert that “the evidence suggests that the more evaluators become schooled in the structure, culture, and politics of their program and policy communities, the better prepared they are to be strategic about the factors most likely to affect use” (Shulha & Cousins, 1997, 203). Through these methods of use, as well as collaboration and “schooling”, our evaluation should have an impact on program implementation.

Step #7: Commitment to Standards of Practice

As an evaluation plan is designed as a framework for conducting a program evaluation, it is even more focused on criteria and standards than a program rationale. By examining our program and evaluation plan, we can identify how it follows the Standards for Program Evaluation. According to Chen, program plans focusing on improvement must include visible standards that participants meet before being included in the target population (Chen, 2011, 12). Any program or evaluation plan comprises an implementing organization, program implementers, associate organizations and community partners, an ecological context, intervention and service delivery protocols, and the previously mentioned target population. In our particular evaluation plan, the implementing organization would be our school and its social and emotional team. The program implementers would be the blended learning teachers facilitating the program, who are provided with resources and training. The associate organizations and community partners would likely be our Ministry of Education, whose guiding principles of social and emotional learning are embedded in the core competencies. Our ecological context would be the monthly assemblies led by administration, the morning announcements focusing on the Brain Fit Super Powers monthly themes (e.g., self-regulation, gratefulness and empathy), and the bulletin boards located throughout the school as advertising planned by stakeholders. Intervention relates to the continual focus on self-regulation, which in turn would draw primarily on the school code of conduct and its non-negotiables that students must abide by (e.g., being kind). Finally, our target population would comprise the students within the blended learning program at our elementary school. These classes include a range of age groups, French and English programming, and families from a variety of socioeconomic backgrounds.

Throughout this evaluation plan, our goal will be to use our guiding questions and standards to maintain an ethical evaluation that may lead to improvements in the program for future students.

While examining the Program Evaluation Standards, we must identify the main categories of these standards and relate each to our evaluation of the implementation of the Brain Fit Super Powers. The four categories are utility standards, feasibility standards, propriety standards, and accuracy standards. As stated in the Summary of Standards, “the utility standards are intended to ensure that an evaluation will serve the information needs of intended users” (Standards for Program Evaluation). The purpose of the program and evaluation will be to provide valuable feedback to stakeholders, but also to identify whether the program will eventually become a school-wide approach to student intervention and support. This relates directly to the utility standards. According to the Standards for Program Evaluation, “The feasibility standards are intended to ensure that an evaluation will be realistic, prudent, diplomatic, and frugal” (Standards for Program Evaluation). In our context, the evaluation will have no expense other than printing the program materials, the assessment will be conducted by teachers who are already implementing these program lessons daily, and prudence is reflected in the team’s decisions about how to conduct each stage of the program. Because this program and evaluation do not raise legal issues, the important consideration is whether they are ethical. The Summary of Standards states, “the propriety standards are intended to ensure that an evaluation will be conducted legally, ethically and with due regard for the welfare of those involved” (Standards for Program Evaluation). Finally, the accuracy standards relate to our evaluation in that the evaluation itself will indicate whether the program should be adjusted and continued, or whether another program should take its place. Our program is one of many social and emotional programs available, and the accuracy standards will help determine its worth.
The Standards for Program Evaluation clarify, “the accuracy standards are intended to ensure that an evaluation will reveal and convey technically adequate information about the features that determine worth or merit of the program being evaluated” (Standards for Program Evaluation). It is through the use of these processes that this evaluation holds to the Standards for Program Evaluation.